Many Embedded Systems Qualify as AI Under the EU AI Act — Does Yours?


Key Facts

- Many embedded products already qualify as AI systems under the EU AI Act if they use data-driven inference or trained models.

- An AI system can be classified as high-risk if it is a safety component of a regulated product or used in specific Annex III applications.

- Companies that place a product with AI functionality on the market may be considered AI system providers, even if the model comes from a third party.

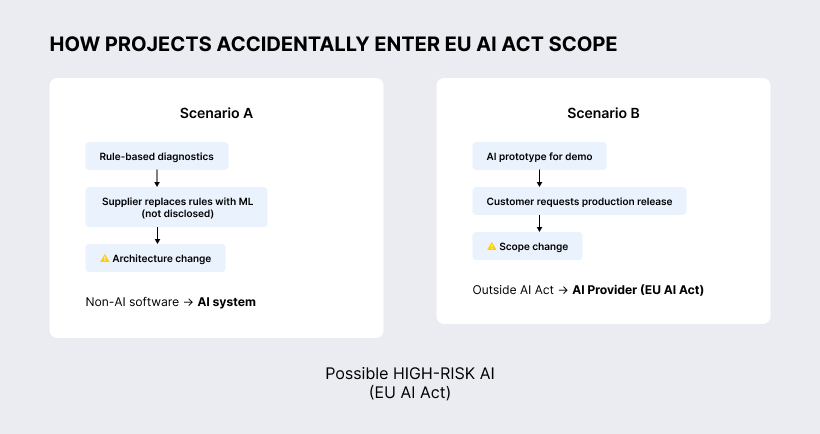

- Products can unintentionally enter AI Act scope during development — for example when rule-based logic is replaced with machine learning or when a prototype becomes a product.

- High-risk AI systems require documentation, risk management, transparency measures, monitoring, and in some cases conformity assessment before market entry.

The EU AI Act is often associated with large AI platforms, cloud models, or data-science companies. But the regulation reaches much further. Many embedded products already fall within its scope — sometimes without teams realizing it. If your device includes data-driven inference, predictive logic, or trained models, it may legally qualify as an AI system under the Act. Understanding where embedded software crosses the line into AI is now a critical step for engineering and product teams across Europe.


The Assumption Many Embedded Teams Make

Many embedded teams still think the EU AI Act is for AI labs and SaaS companies, not for firmware teams. I understand why. In embedded projects, we usually talk about firmware, drivers, diagnostics, and maybe backend integrations. We do not expect anyone to call this an “AI system”. But under the EU AI Act, function matters more than naming. If your system uses inference from data-driven models, you may already be in scope without having noticed.

A Brief Timeline

| Date | Milestone |
| --- | --- |
| August 1, 2024 | AI Act entered into force |
| February 2, 2025 | Prohibited AI practices (Article 5) banned |
| August 2, 2025 | GPAI model obligations apply |
| August 2, 2026 | High-risk AI rules apply (Annex III categories) |
| August 2, 2027 and later | Full application; extended window for Annex I products closes |
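For roadmap planning, the timeline can be turned into a simple lookup. The sketch below is only an illustration: the dates and labels are copied from the table above, while the names `MILESTONES`, `milestones_reached`, and `next_milestone` are hypothetical helpers, and the dates should be rechecked against the current legal text before anyone relies on them.

```python
from datetime import date
from typing import Optional

# Dates and labels copied from the timeline table (assumption: still current).
MILESTONES = [
    (date(2024, 8, 1), "AI Act entered into force"),
    (date(2025, 2, 2), "Prohibited AI practices (Article 5) banned"),
    (date(2025, 8, 2), "GPAI model obligations apply"),
    (date(2026, 8, 2), "High-risk AI rules apply (Annex III categories)"),
    (date(2027, 8, 2), "Full application; extended window for Annex I products closes"),
]

def milestones_reached(on: date) -> list[str]:
    """Return the labels of all milestones already in effect on the given date."""
    return [label for when, label in MILESTONES if when <= on]

def next_milestone(on: date) -> Optional[tuple[date, str]]:
    """Return the earliest milestone strictly after the given date, if any."""
    upcoming = [(when, label) for when, label in MILESTONES if when > on]
    return min(upcoming) if upcoming else None

# Hypothetical planned market-entry date for a product release.
planned_launch = date(2026, 9, 1)
for label in milestones_reached(planned_launch):
    print("in effect at launch:", label)
print("next:", next_milestone(planned_launch))
```

A check like this does not answer the scope question, but it makes it harder to schedule a launch without noticing which obligations will already apply on that date.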

One thing worth noting: in November 2025, the European Commission proposed the Digital Omnibus package, which includes amendments to the AI Act intended to reduce administrative burden for smaller operators. As of March 2026, the package is still a proposal and has not been enacted. It may adjust timing and reduce some procedural burden, especially where standards are not ready, but it does not remove the core logic of classification or provider obligations.

For embedded teams in regulated product domains, scope is still the main question to solve early.

Three Definitions That Change Your Situation

To understand whether your product falls under the EU AI Act, three definitions are particularly important.

AI system

The Act defines an AI system as a machine-based system that can operate with some autonomy and infer from input how to produce outputs such as predictions, classifications, recommendations, or decisions.

In practical terms, the important line is simple: if behavior comes from patterns learned from data, you are in AI territory. If behavior follows fully predefined, human-written rules, you are usually in conventional software territory. A hard-coded threshold in firmware is not AI. A small trained model for anomaly detection is AI, even if it is tiny, open-source, and runs fully on-device.
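The contrast can be sketched in a few lines of Python. This is a minimal illustration, not a legal test: the limit value, the sample readings, and the function names `rule_based_overload`, `fit_anomaly_model`, and `learned_anomaly` are all hypothetical. A mean and standard deviation fitted to data stand in for a trained model only to keep the example dependency-free; the point is where the decision boundary comes from, not how any particular technique would be classified under the Act.

```python
from statistics import mean, stdev

# Hypothetical limit written by an engineer (conventional rule-based logic).
OVERLOAD_LIMIT_AMPS = 12.0

def rule_based_overload(current_amps: float) -> bool:
    """Behavior is fully defined by a human-written rule."""
    return current_amps > OVERLOAD_LIMIT_AMPS

def fit_anomaly_model(training_readings: list[float]) -> tuple[float, float]:
    """'Training': the parameters are estimated from data, not written by hand."""
    return mean(training_readings), stdev(training_readings)

def learned_anomaly(current_amps: float, model: tuple[float, float],
                    z_limit: float = 3.0) -> bool:
    """Behavior depends on the parameters learned from the training data."""
    mu, sigma = model
    return abs(current_amps - mu) / sigma > z_limit

# Hypothetical readings recorded during normal operation.
readings = [9.8, 10.1, 10.0, 9.9, 10.2, 10.0, 9.7, 10.3]
model = fit_anomaly_model(readings)

print(rule_based_overload(11.5))     # False: below the hand-written limit
print(learned_anomaly(11.5, model))  # True: far outside the learned pattern
```

Both functions take the same input and return the same type, which is exactly why the switch from one to the other is easy to miss in a code review or a release note.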

High-risk AI system

An AI system can become high-risk in two common ways:

- it is a safety component of a product regulated under the EU legislation listed in Annex I (for example, medical devices or machinery), or

- it is used in a standalone Annex III use case (for example employment, critical infrastructure, access to essential services).

For embedded teams, the first path is often the relevant one.
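The two paths can be written down as a first-pass triage. The sketch below is an assumption-laden simplification: `ProductProfile` and `triage` are hypothetical names, the three inputs are answers a team would have to establish itself, and the Act attaches further conditions and exceptions that are not modeled here. It is meant to show the order of the questions, not to replace a legal assessment.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ProductProfile:
    """Hypothetical answers to the scoping questions for one product."""
    uses_learned_inference: bool                # behavior derived from a trained model
    safety_component_of_annex_i_product: bool   # path 1: regulated product
    annex_iii_use_case: Optional[str] = None    # path 2: e.g. "critical infrastructure"

def triage(profile: ProductProfile) -> str:
    """Return a rough first-pass label; every result still needs expert review."""
    if not profile.uses_learned_inference:
        return "likely conventional software"
    if profile.safety_component_of_annex_i_product:
        return "potentially high-risk (Annex I safety component)"
    if profile.annex_iii_use_case:
        return f"potentially high-risk (Annex III: {profile.annex_iii_use_case})"
    return "AI system, not flagged as high-risk by this triage"

# Hypothetical example: an ML-based diagnostic inside a regulated machine.
print(triage(ProductProfile(uses_learned_inference=True,
                            safety_component_of_annex_i_product=True)))
```

The value of writing it down is mostly in the inputs: if nobody on the team can answer the three questions for a given product, that is the gap to close first.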

Provider vs. Deployer

A Provider is the entity that develops an AI system, or has one developed, and places it on the market or puts it into service under its own name or trademark. A Deployer is the entity that uses an AI system under its authority in a professional context. Responsibilities split across the chain. But there is a catch: shipping a system whose AI function relies entirely on a third-party model does not exempt you. If the product goes to market under your name, you are still the Provider of an AI system.

How Embedded Products Accidentally Enter EU AI Act Scope

The transition from conventional software to an AI system often happens quietly during normal product development.

Scenario A: The Supplier Who Said Yes

You manufacture a device with self-diagnostic monitoring: overload detection, basic self-checks. Rule-based, conventional software. Firmware and backend are developed by an outsourcing supplier.

Analytics on the backend are basic. You ask for improvements: better reliability, fewer false positives. The supplier agrees, no questions asked. The next release ships. Performance is genuinely better. You update your fleet.

What you were not told: the supplier replaced the rule-based diagnostic logic with a trained ML model. The improvement was real. The architectural decision was undisclosed. Your product is now an AI system. If your device operates in an industrial safety or other regulated context, you may now be the Provider of a high-risk AI system, with documentation, testing, and conformity obligations you never planned for.

The supplier implemented your requirement. That was the scope of the engagement. A development partner takes a different position: they flag when implementation choices carry regulatory or architectural consequences, propose alternatives, and do not let compliance-relevant decisions pass without your awareness. The difference between a supplier and a partner is not the quality of the code. It is the ownership of the outcome.
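One inexpensive safeguard on the receiving side is to scan each delivered release for files that look like serialized models. The sketch below is a heuristic under stated assumptions: `find_model_artifacts` and `MODEL_SUFFIXES` are hypothetical names, and the suffix list covers only a few common model file formats. An empty result proves nothing, because a model can be compiled into firmware or served from a backend you never receive, so this complements a contractual disclosure requirement rather than replacing it.

```python
from pathlib import Path

# Common file suffixes for serialized ML models (assumption: not exhaustive).
MODEL_SUFFIXES = {".tflite", ".onnx", ".pt", ".pth", ".h5", ".pb", ".joblib"}

def find_model_artifacts(release_dir: str) -> list[Path]:
    """Return files under release_dir whose suffix suggests a trained model."""
    root = Path(release_dir)
    return sorted(
        path for path in root.rglob("*")
        if path.is_file() and path.suffix.lower() in MODEL_SUFFIXES
    )
```

Run against an unpacked delivery in a CI step, a non-empty result is a prompt to ask the supplier what changed and why, before the release reaches the fleet.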

Scenario B: The POC That Shipped

You built a working prototype for a pitch: a small classifier to demonstrate predictive capability to a prospective customer. It worked. The customer was impressed and asked you to ship it as a product.

Before that conversation, you were not in scope for the AI Act. After it, you are: you become the Provider of an AI system, potentially in the high-risk category, depending on the customer’s application context.

An experienced partner would have raised these two questions before the pitch — not after the contract:

- First, the alternative question: does this use case actually require a trained model, or can a well-designed rule-based approach deliver the same outcome? If a rule-based approach is enough, you stay out of AI Act scope entirely. That is a legitimate engineering trade-off, and it requires someone who understands both the technical and regulatory dimensions before a demo becomes a commitment.

- Second, the compliance path: if AI is the right answer, what does the road to market look like? Technical documentation, risk management, and conformity assessment all have timelines and costs. Knowing this before signing is worth considerably more than knowing it after.

What High-Risk Means in Practice

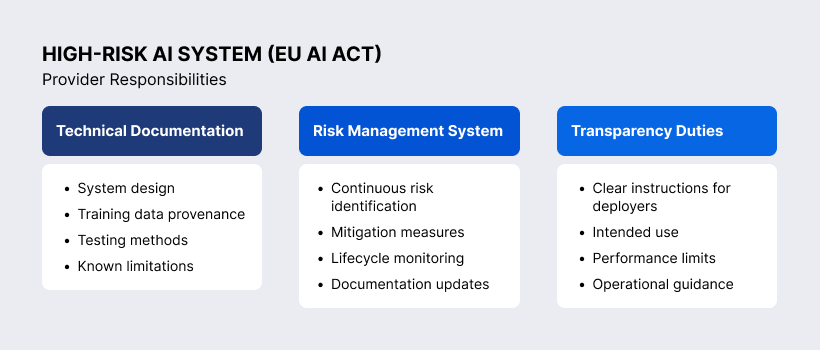

If your system is high-risk and you are a Provider, you should expect work in these areas:

- technical documentation covering system design, training data provenance, testing methodology, and known limitations;

- a risk management system maintained throughout the product lifecycle;

- transparency obligations toward Deployers, including instructions for intended use and performance boundaries;

- post-market monitoring and incident logging;

- for some product categories, conformity assessment by a notified body before market entry.

Where notified body involvement is not required, self-assessment may be possible under defined conditions.

AI literacy obligations also extend beyond high-risk systems. Teams building, integrating, selling, or operating AI systems should have a role-appropriate understanding of risks and responsibilities.

Building all of this retroactively, after the product is already in the field, is substantially more expensive than integrating it into the development process from the start.

How SaM Solutions Can Help

We develop embedded firmware, BSPs, and backend systems for clients across regulated and industrial sectors. Our engagement model is built around partnership, not task execution. We take ownership of the solutions we build, which means we flag when implementation decisions have consequences beyond the immediate requirement.

For clients navigating the EU AI Act, this means:

- identifying when a proposed implementation would introduce an AI system into the product, and evaluating alternatives where appropriate;

- raising compliance implications before architectural choices are locked, not after they are deployed;

- supporting technical documentation, risk management, and conformity preparation when AI is the right path forward.

If your product roadmap includes features that could qualify as AI systems, or if you are uncertain whether they already do, we are available to help you assess your exposure and navigate the options.


If You Are at Embedded World 2026

We are attending EW26 through March 12, 2026. If the scenarios above raise questions about your product roadmap or existing device portfolio, reach out. We are available to meet on-site.

Final Takeaway

The EU AI Act is not only for companies that call themselves “AI companies”.

It applies to anyone who places systems on the market with AI functionality, including embedded products.

In embedded projects, the main risk is often not bad intent. It is late detection.

If your current or upcoming products include inference-based features operating in safety, industrial, or health contexts, the time to assess your exposure is now, not when the first conformity question arrives from a customer or regulator.

Legal basis: Regulation (EU) 2024/1689 – EU Artificial Intelligence Act (Full Text)

See also: EU AI Act – European Commission Overview

For a complementary perspective on embedded product compliance under the Cyber Resilience Act, see our earlier article.
