The Latest 10 Information Technology Trends in 2026


Key Takeaways

- AI is no longer just software. It’s becoming part of the infrastructure itself, built into robots, edge devices, security platforms, and teams of collaborating AI agents.

- Speed and autonomy now set the pace. Edge computing, 5G, AI-native development, and platform engineering help systems react faster and operate with less human intervention.

- Security and trust are getting smarter. Companies are moving toward preemptive cybersecurity, confidential computing, and blockchain-based provenance to stay ahead of growing risks.

- The leaders invest early and with purpose. They adopt new technologies where it matters most, while keeping sustainability, privacy, and long-term scalability firmly in focus.

The IT world is always on the move: new technologies, tools, and ideas constantly enter the industry. That’s why it’s crucial to look at the recent trends in information technology that are likely to define our digital life in the near term.

Information technologies develop symbiotically, inevitably influencing each other: a breakthrough in one area stimulates innovations in others. For instance, new findings in artificial intelligence (AI) and machine learning (ML) trigger the creation of more sophisticated mobile applications or IoT systems.

Today’s businesses and social spheres depend on, and grow because of, advancements in intelligent technologies, robotics, cybersecurity, blockchain, and 5G connectivity, to name a few. Understanding the latest information technology trends can place you a step ahead of the competition.


1. Advances in Artificial Intelligence and Generative AI/LLMs

Over the past few years, artificial intelligence has dominated tech headlines. And by 2026, it has recalibrated the entire IT landscape. Companies of all sizes have begun to introduce AI solutions into their operations and gain real benefits: more efficient workflows, fewer production issues, better customer service, and higher revenue, to name a few.

Here are some impressive stats:

- Recent projections show the global AI market reached $244 billion in 2025, with continued rapid growth toward well over $800 billion by 2030.

- In the US, about 88% of companies use AI (especially generative AI) in at least one function. (McKinsey)

- AI chatbots handle up to 70% of customer service interactions.

Practical applications of machine learning and artificial intelligence solutions are hard to enumerate. In healthcare, they diagnose diseases, assist in surgeries, and develop personalized treatments (solution example — IBM Watson Health). Retail and ecommerce utilize chatbots and smart product recommendations (solution example — Amazon AI). In the financial sphere, artificial intelligence works hard to detect fraud, forecast stock market tendencies, and automate planning (solution example — Wealthfront robo-advisor).

What truly defines this year, however, is the rise of enterprise-grade generative AI. Organizations are increasingly implementing fine-tuned large language models (LLMs) trained on proprietary data, connected to internal systems via APIs, and governed by strict security and audit controls. AI copilots assist developers, sales teams, HR departments, and more. Not as replacements, but as force multipliers that compress time-to-value and reduce cognitive load.

Today, LLMs draft and review code, generate business documents, summarize meetings, power multilingual customer support, and automate content creation at a scale that was unthinkable just a few years ago.

Geographically, North America remains a leader in AI deployment, but Europe has accelerated sharply thanks to industry-specific AI solutions and regulatory clarity, while Asia continues to push innovation at scale. Manufacturing, healthcare, finance, logistics, and cybersecurity remain the biggest beneficiaries.

2. AI Security Platforms and Preemptive Cybersecurity

- AI security platforms are integrated cybersecurity systems that use machine learning and data analytics to monitor environments continuously, correlate signals across systems, and automate defensive actions. Leading platforms for 2026 include CrowdStrike, Palo Alto Networks, Darktrace, and specialized, emerging vendors like Akto, Lakera, and Prompt Security.

- Preemptive cybersecurity goes a step further: it focuses on predicting and stopping attacks before they materialize, rather than reacting after damage is done. In practice, it’s a transition from legacy “alarm bell” systems to proactive defenses that model potential attack vectors, simulate breach scenarios, and automatically neutralize threats based on predictive signals. These platforms blend machine learning, behavioral analytics, and automated playbooks so security operations centers (SOCs) can reduce mean time to detect and mean time to respond (MTTD/MTTR), critical KPIs in an era of constantly evolving threats.
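To make the two KPIs above concrete, here is a minimal sketch of how MTTD and MTTR could be computed from incident timestamps. The incident records, field names, and values are invented for illustration, and definitions vary between teams (some measure MTTR up to containment or recovery rather than resolution).

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident log: when the attack began, when it was detected,
# and when it was resolved. All values are made up for illustration.
INCIDENTS = [
    {"started": "2026-01-05T08:00", "detected": "2026-01-05T08:30", "resolved": "2026-01-05T10:30"},
    {"started": "2026-01-12T14:00", "detected": "2026-01-12T15:30", "resolved": "2026-01-12T16:30"},
]


def _minutes(start: str, end: str) -> float:
    """Minutes elapsed between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 60


def mttd(incidents) -> float:
    """Mean time to detect: average of (detected - started), in minutes."""
    return mean(_minutes(i["started"], i["detected"]) for i in incidents)


def mttr(incidents) -> float:
    """Mean time to respond: average of (resolved - detected), in minutes."""
    return mean(_minutes(i["detected"], i["resolved"]) for i in incidents)


print(f"MTTD: {mttd(INCIDENTS):.0f} min, MTTR: {mttr(INCIDENTS):.0f} min")
```

Tracking both numbers over time is one simple way to check whether predictive tooling is actually shortening the detection and response windows.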

In 2026, this shift from reactive defense to predictive protection defines modern security strategy. The urgency is clear. The scale and complexity of cyberthreats have exploded, driven in part by AI-enabled adversaries and automated attack tools.

According to recent industry research, 72% of security decision-makers say risk is higher than ever, with more than half of organizations seeing threat activity weekly, often powered by generative AI and automated methods.

At the same time, AI in cybersecurity is projected to be a rapidly expanding market, with annual spending expected to grow sharply through the rest of the decade.

3. Convergence of AI and Robotics (Physical AI)

Physical AI refers to artificial intelligence systems that can perceive the physical world and act within it through robotic bodies, machines, or autonomous devices. Unlike purely digital artificial intelligence, physical AI connects algorithms to sensors, motors, and real-world environments that turn intelligence into movements and manipulations.

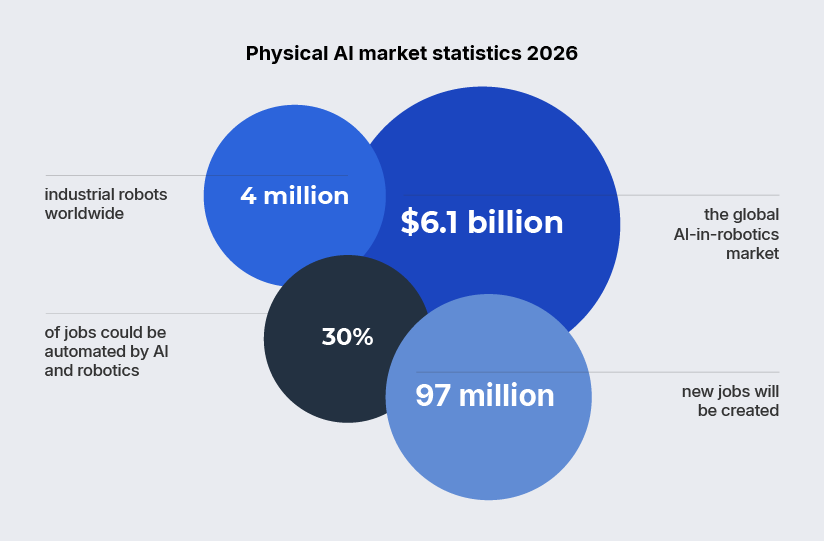

- Estimates by MarketsandMarkets suggest the AI-in-robotics market could grow from about $6.1 billion in 2025 to over $33 billion by 2030, at a compound annual growth rate (CAGR) above 40%, driven by adoption in manufacturing, logistics, and service automation.

- The number of industrial robots in operation worldwide exceeded 4 million units in 2025.

- Studies by PwC and the McKinsey Global Institute indicate that up to 30% of jobs or working hours could be automated by the early 2030s, driven by AI and robotics.

- AI and robotics are expected to create around 97 million new roles between 2025 and 2030, particularly in areas such as robotics maintenance and automation programming.
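The market figures in the first bullet above can be sanity-checked with the standard compound annual growth rate formula. The sketch below uses only the numbers quoted in this section; the function name `cagr` is an illustrative choice.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.40 means 40% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above: about $6.1B in 2025 and $33B by 2030 (5 years).
rate = cagr(6.1, 33.0, 2030 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

Growing from $6.1 billion to $33 billion in five years works out to roughly 40% per year, which is consistent with the quoted estimate.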

What’s driving the shift is maturity on both sides. AI models can now interpret visual, spatial, and contextual data in real time, while robotics hardware has become more affordable, precise, and energy-efficient. As a result, we witness a new generation of autonomous systems that can adapt on the fly: robots that navigate dynamic environments, collaborate safely with humans, and learn from experience rather than rigid programming.

- Manufacturing remains the epicenter. AI-powered robots handle complex assembly, quality inspection, and predictive maintenance, adjusting their behavior based on sensor feedback and production data.

- Logistics and warehousing follow closely, where autonomous mobile robots optimize picking, packing, and last-meter delivery.

- In healthcare, physical AI supports assisted surgery, rehabilitation, and hospital logistics.

- In agriculture, it enables precision farming, autonomous harvesting, and resource optimization.

4. Edge Computing and 5G Connectivity

Edge computing has been among the top trends in information technology for several years in a row. Regarded as an evolutionary step beyond cloud computing, edge computing places data processing nodes in proximity to both data sources and consumers. This decentralized paradigm of data handling offers lower latency and a faster and more effective means of extracting important information. Real-time operations in healthcare, smart manufacturing, logistics, and other industries reap a lot of benefits from this approach.

- In 2026, the global edge computing market is valued at around $39.6 billion and is on track for explosive long-term growth, expected to exceed half a trillion dollars by 2035. (Research Nester)

The expansion of the Internet of Things (IoT) ecosystem is escalating the volume of data generated by enterprises and, consequently, the demand for edge computing devices.

- Analysts expect almost 1,200 network edge data centers globally by 2026, up sharply from just under 250 in the early 2020s. This is evidence of widespread deployment to support low-latency services and real-time applications. (STL Partners)

Emerging trends in edge computing

5G and edge integration. Faster 5G networks enable ultra-low latency edge applications, improving AR/VR, gaming, and healthcare. A separate analysis by Research Nester suggests the 5G edge cloud network and services market will grow from $7.5 billion in 2025 to about $8.9 billion in 2026, expanding further as enterprise demand for real-time processing rises.

AI at the edge. AI-powered devices, like smart cameras and wearables, analyze data locally for instant decision-making.

Edge security solutions. Companies use zero-trust security models to protect sensitive information processed locally.

Hybrid cloud-edge systems. Businesses use a mix of cloud computing and edge computing to balance efficiency and scalability.
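The “AI at the edge” and hybrid cloud-edge ideas above can be sketched in a few lines: a device analyzes readings locally and forwards only the unusual ones to the cloud. This is a simplified illustration, not a production design. The class name, window size, threshold, and sensor values are all assumptions, and a rolling z-score stands in for whatever model a real device would run.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeAnomalyFilter:
    """Keeps a rolling window of readings on the device and flags outliers."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.readings = deque(maxlen=window)
        self.threshold = threshold

    def process(self, value: float) -> bool:
        """Return True if the reading should be forwarded to the cloud."""
        is_anomaly = False
        if len(self.readings) >= 5:  # wait for a small baseline first
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Only normal readings update the local baseline.
            self.readings.append(value)
        return is_anomaly


# Simulated temperature stream with one spike (values are made up).
stream = [20.1, 20.3, 19.9, 20.0, 20.2, 20.1, 35.0, 20.0, 19.8]
edge = EdgeAnomalyFilter()
forwarded = [v for v in stream if edge.process(v)]
print(f"Forwarded {len(forwarded)} of {len(stream)} readings: {forwarded}")
```

Because the decision is made on the device, routine readings never leave it. That is where the latency and bandwidth savings of edge processing come from.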

5. AI-Native Development and Platform Engineering

- AI-native development describes a software engineering approach where artificial intelligence is built into the application lifecycle from the very beginning. Not added later as a feature, but treated as a core design assumption.

- Platform engineering is the practice of building internal developer platforms that offer standards for infrastructure, tools, and workflows, enabling teams to deliver software faster and more reliably.

By 2026, these two disciplines have converged into a new operating model for modern IT organizations. The catalyst is scale. As software ecosystems grow more complex, traditional development models buckle under the weight of microservices, clouds, compliance requirements, and constant delivery pressure.

AI-native systems automate code generation, testing, dependency management, and performance optimization, and increasingly support vibe coding, where developers work at a higher level of intent. They guide outcomes through prompts, context, and architectural constraints rather than writing every line of code manually. And platform engineering provides the stable foundation (golden paths, self-service infrastructure, and reusable components) on top of which AI can operate effectively.

In practice, developers no longer start with empty repositories. AI copilots scaffold applications, suggest architectures, and surface risks in real time, while internal platforms handle deployment, security policies, and observability behind the scenes. The developer’s role shifts from low-level implementation to orchestration and decision-making, forming the “vibe” of the system while AI handles much of the mechanical execution.

6. Quantum Computing Developments

You may be surprised, but traditional computers are quite slow at certain classes of problems. As quantum computing matures, scientists and tech leaders predict that quantum computers will become the backbone of the future digital world.

Quantum computing technology is a way of transmitting and processing information based on the phenomena of quantum mechanics. Traditional computers use binary code (bits) to handle information. The bit has two basic states, zero and one, and can only be in one of them at a time. The quantum computer uses qubits, which are based on the principle of superposition. The qubit also has two basic states: zero and one. However, due to superposition, it can combine values and be in both states at the same time.

Quantum computing principles

- Superposition. A qubit can be 0 and 1 at the same time, enabling massive parallel processing.

- Entanglement. Qubits can be linked together so that their measurement outcomes are correlated, even at a distance: measuring one qubit immediately tells you about the state of the other.
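Both principles can be illustrated with a tiny classical simulation of two qubits. The sketch below is a teaching aid written in plain Python rather than a quantum SDK, and the function names are illustrative. It puts one qubit into superposition and then entangles it with a second one, producing what is known as a Bell state.

```python
import math

# State vector for 2 qubits: amplitudes for |00>, |01>, |10>, |11>.
# The left bit is qubit 0, so index 2 (binary 10) means qubit 0 = 1, qubit 1 = 0.
state = [1.0, 0.0, 0.0, 0.0]  # start in |00>


def hadamard_on_qubit0(s):
    """Put qubit 0 into an equal superposition of 0 and 1."""
    h = 1 / math.sqrt(2)
    return [
        h * (s[0] + s[2]),  # |00>
        h * (s[1] + s[3]),  # |01>
        h * (s[0] - s[2]),  # |10>
        h * (s[1] - s[3]),  # |11>
    ]


def cnot_control0_target1(s):
    """Flip qubit 1 whenever qubit 0 is 1 (swaps the |10> and |11> amplitudes)."""
    return [s[0], s[1], s[3], s[2]]


state = cnot_control0_target1(hadamard_on_qubit0(state))

# Amplitudes are real here, so the measurement probability is the square.
probabilities = [round(a * a, 3) for a in state]
print(dict(zip(["00", "01", "10", "11"], probabilities)))
```

The only possible measurement results are 00 and 11, each with probability 0.5. Each qubit on its own looks random, yet the two outcomes always agree.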

Quantum parallelism helps certain algorithms reach a solution without checking every possible variant of the system state one by one. In addition, a quantum device does not need the huge memory that a classical simulation of the same system would require. Imagine: 100 qubits are enough to represent a system of 100 two-state particles, whereas a classical computer would have to track 2^100 (about 10^30) values, far beyond trillions of bits.
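The gap between qubits and classical memory can be made concrete with a short calculation. A full classical description of n qubits stores 2^n complex amplitudes. The sketch assumes 16 bytes per amplitude (two 64-bit floats), which is a common but not universal choice.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to store all 2**n complex amplitudes of an n-qubit state."""
    return (2 ** num_qubits) * bytes_per_amplitude


for n in (10, 30, 50, 100):
    print(f"{n:>3} qubits -> {statevector_bytes(n):.3e} bytes")
```

Thirty qubits already need 16 GiB under this assumption, and every additional qubit doubles the requirement. This is why brute-force classical simulation stops being practical well before 100 qubits.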

With quantum computing, it’s much easier to process large sets of information, which is incredibly beneficial for predictive analytics applications. Further development and widespread adoption of the technology are therefore only a matter of time.

| Quantum computing in action | |
| --- | --- |
| IBM Quantum | Provides cloud-based quantum computing access for research and business applications. |
| Google’s Sycamore processor | Achieved “quantum supremacy” by solving a problem in minutes that would take classical computers thousands of years. |
| D-Wave’s quantum annealing | Designed specifically to solve complex optimization and sampling problems by finding the lowest energy state in a system. |

Quantum computing holds great promise in several key areas.

- In climate science, quantum simulations can model climate change scenarios with greater accuracy.

- The field of drug discovery benefits from the quantum simulation of molecules to accelerate the drug development process.

- Quantum algorithms can enhance logistics and supply chain services by optimizing routes for delivery networks.

- In cybersecurity, it’s becoming possible to create virtually unhackable communication systems through quantum encryption.

7. Sustainable Technology and Green IT

Climate change and resource depletion are becoming urgent concerns, which is why sustainability is no longer an option but a necessity in the technology industry. It’s the current generation’s responsibility to meet present needs without compromising the ability of future generations to meet their own needs.

A study revealed that sustainable IT practices could reduce global carbon emissions by up to 30% by 2030, which makes sustainability one of the top trends for environmentally conscious companies.

- Tech giants are now investing in renewable energy sources to power their data centers.

- Smart cities implement green buildings and electric transport, as well as employ AI-driven solutions to optimize energy use and minimize waste.

- Sustainable supply chains adopt eco-friendly shipping methods, biodegradable packaging, and recycled materials.

- Sustainable farming uses precision irrigation, organic farming, and AI-driven crop management.

The list can go on, proving that much effort is being spent in this area.

8. Multi-Agent AI Systems

A fast-growing branch of artificial intelligence is multi-agent AI systems. These are architectures where multiple autonomous AI agents collaborate and coordinate to achieve common goals. Unlike single, isolated AI models, multi-agent systems operate as teams. Each agent has its own role, context, and decision logic. The system as a whole adapts dynamically, much like a group of human specialists working together.

If traditional AI follows predefined instructions and early agentic AI focuses on individual autonomy, multi-agent AI introduces collective intelligence. These systems can distribute tasks, plan in parallel, resolve conflicts, and optimize outcomes across complex environments. At their core are large language models, reinforcement learning, machine learning, and natural language processing (NLP), combined with orchestration layers that manage inter-agent communication.

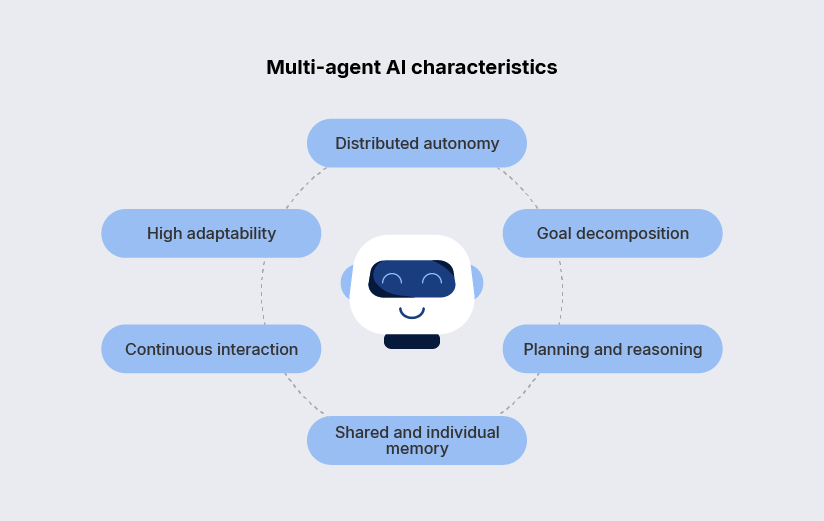

Key characteristics of multi-agent AI systems

- Distributed autonomy. Multiple agents act independently but coordinate decisions without constant human oversight.

- Goal decomposition. Complex objectives are broken into subtasks, assigned to specialized agents, and recombined into coherent results.

- Planning and reasoning. Agents anticipate dependencies, handle uncertainties, and adjust plans collectively as conditions change.

- Shared and individual memory. Each agent learns from experience, while shared context enables system-wide improvement over time.

- Continuous interaction. Agents communicate with humans, other agents, and external systems in real time.

- High adaptability. The system evolves dynamically as agents learn, fail, recover, and optimize strategies together.
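Goal decomposition and shared memory, two of the characteristics listed above, can be sketched with a toy orchestrator. Everything here is hypothetical: the `Agent` and `Orchestrator` classes are invented for illustration, and simple string-formatting functions stand in for the language models or tools a real agent would call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Agent:
    """A specialized worker; `handle` stands in for a model or tool call."""
    role: str
    handle: Callable[[str, Dict[str, str]], str]


@dataclass
class Orchestrator:
    agents: Dict[str, Agent]
    shared_memory: Dict[str, str] = field(default_factory=dict)

    def run(self, plan: List[Tuple[str, str]]) -> str:
        """Execute (role, subtask) pairs in order, then merge the results."""
        for role, subtask in plan:
            result = self.agents[role].handle(subtask, self.shared_memory)
            self.shared_memory[role] = result  # visible to later agents
        return " | ".join(self.shared_memory[role] for role, _ in plan)


# Toy agents: each one formats a string; later agents read the shared context.
researcher = Agent("research", lambda task, mem: f"facts about {task}")
writer = Agent("write", lambda task, mem: f"draft using [{mem['research']}]")
reviewer = Agent("review", lambda task, mem: f"approved: {mem['write']}")

orchestrator = Orchestrator({a.role: a for a in (researcher, writer, reviewer)})
plan = [("research", "edge computing"), ("write", "summary"), ("review", "summary")]
report = orchestrator.run(plan)
print(report)
```

The plan is fixed and sequential here to keep the sketch short. Real multi-agent frameworks typically let agents build and revise the plan themselves, run subtasks in parallel, and retry after failures.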

9. Confidential Computing and Data Privacy

Confidential computing is a security model that protects data while it is being processed, not just at rest or in transit. It relies on hardware-based trusted execution environments (TEEs), which keep sensitive workloads encrypted in memory and isolated from the operating system, cloud provider, and administrators. By 2026, this approach has become a practical response to growing privacy risks in cloud and intelligent systems.

The driver is simple: organizations need to process highly sensitive data in shared, cloud-based environments without losing control or violating regulations. Confidential computing makes this possible through secure analytics, AI training, and data sharing without exposing raw data. It is particularly relevant for finance, healthcare, government, and any workload that touches personal or regulated information.
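One idea behind this model is that a data owner releases secrets only to code it has approved. The toy sketch below imitates that check in plain Python. It is a loose analogy and not a real TEE API: in actual hardware, the measurement is produced and signed by the processor, whereas here a plain SHA-256 hash stands in for it. All names and values are hypothetical.

```python
import hashlib
import hmac

# Code the data owner has audited and is willing to trust (placeholder bytes).
APPROVED_WORKLOAD = b"def score(record): return record['income'] * 0.3"
EXPECTED_MEASUREMENT = hashlib.sha256(APPROVED_WORKLOAD).hexdigest()


def measure(workload: bytes) -> str:
    """Stand-in for the hardware measuring the code loaded into the enclave."""
    return hashlib.sha256(workload).hexdigest()


def release_key_if_trusted(reported_measurement: str, data_key: bytes):
    """Hand over the data key only if the measurement matches the approved code."""
    if hmac.compare_digest(reported_measurement, EXPECTED_MEASUREMENT):
        return data_key
    return None


key = b"hypothetical-data-key"
granted = release_key_if_trusted(measure(APPROVED_WORKLOAD), key)
denied = release_key_if_trusted(measure(APPROVED_WORKLOAD + b" # tampered"), key)
print("approved workload gets key:", granted is not None)
print("modified workload gets key:", denied is not None)
```

Any change to the workload changes its measurement, so the key is withheld and the sensitive data stays encrypted.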

Major cloud and hardware vendors now offer mature solutions in this space, including Intel SGX, AMD SEV, Azure Confidential Computing, and Google Confidential Computing. These platforms integrate directly with modern cloud stacks, making privacy-by-design achievable at scale.

10. Blockchain and Digital Provenance

While blockchain is commonly associated with cryptocurrencies, the technology has already entered diverse fields that require decentralized data storage and transparent transactions. One of its most important emerging roles is digital provenance, i.e. the ability to verify the origin, history, and authenticity of digital and physical assets across their entire lifecycle.

Despite being utilized by only 4% of the global population, blockchain boasts a market value reaching into the billions. In 2026, the blockchain market is firmly on a steep growth trajectory, with long-term forecasts pointing toward a valuation of nearly $250 billion by 2029.

An illustration is supply chain governance: blockchain helps track the delivery of products from the production site to end users, recording every handoff along the way. This creates a trusted provenance trail that virtually eliminates the possibility of falsifications across multiple stages, including financial transactions, warehousing, inventory records, and delivery schedules. The same principle increasingly applies to digital assets, where blockchain verifies who created a file, how it was modified, and whether it can be trusted — a capability growing in importance in the age of AI-generated content.
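The tamper-evidence behind such a provenance trail comes from hash linking: each record commits to the hash of the one before it. The sketch below shows only that mechanism. Real blockchains add digital signatures, distributed copies, and a consensus protocol on top, and the record fields and holder names here are invented for illustration.

```python
import hashlib
import json


def record_hash(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


def append_handoff(chain: list, holder: str, event: str) -> None:
    """Add a handoff that commits to the hash of the previous entry."""
    prev = chain[-1]["hash"] if chain else "0" * 64
    body = {"holder": holder, "event": event, "prev_hash": prev}
    chain.append({**body, "hash": record_hash(body)})


def is_valid(chain: list) -> bool:
    """Recompute every hash and check each link points at its predecessor."""
    prev = "0" * 64
    for entry in chain:
        body = {k: entry[k] for k in ("holder", "event", "prev_hash")}
        if entry["prev_hash"] != prev or entry["hash"] != record_hash(body):
            return False
        prev = entry["hash"]
    return True


trail = []
append_handoff(trail, "Factory", "produced")
append_handoff(trail, "Warehouse", "received")
append_handoff(trail, "Courier", "delivered")
valid_before = is_valid(trail)

trail[1]["holder"] = "Unknown reseller"  # tamper with a past handoff
valid_after = is_valid(trail)
print(f"valid before tampering: {valid_before}, after: {valid_after}")
```

Editing any earlier handoff invalidates its stored hash, and recomputing that hash would break the link from the next entry. A falsification therefore cannot be hidden without rewriting every record that follows.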

Why Choose SaM Solutions As Your Information Technology Partner?

With over 30 years of experience in the software engineering market and a steadfast commitment to excellence, SaM Solutions offers a wealth of expertise and a proven track record in delivering cutting-edge IT solutions. We look toward the technological future, ready to support your digital transformation strategies and ideas.

Our team of professionals, including mobile developers, IoT and embedded experts, and QA engineers, ensures the seamless integration of innovative technologies, propelling your business to new heights. At SaM Solutions, client success is paramount, and we pride ourselves on fostering long-term partnerships built on trust, transparency, agility, and technical proficiency.

Final Thoughts

This article discussed the leading trends in information technology that together outline our technological future. The road ahead promises unprecedented breakthroughs, and those who seize the opportunities these transformative technologies offer will be well placed for lasting success.

FAQ

What are the most significant information technology trends to expect in 2026?

The current trends in the information technology industry include the widespread adoption of generative AI and large language models, the rise of AI-driven and preemptive cybersecurity, and the convergence of AI with robotics (Physical AI). Edge computing combined with 5G enables real-time, low-latency applications, while AI-native development and platform engineering transform how software is built. Other key trends shaping 2026 are advances in quantum computing, sustainable and green IT, multi-agent AI systems, confidential computing for stronger data privacy, and blockchain-based digital provenance for trusted data and asset verification.