AI-Assisted Software Development: The Ultimate Guide to Engineering Productivity

Key Facts

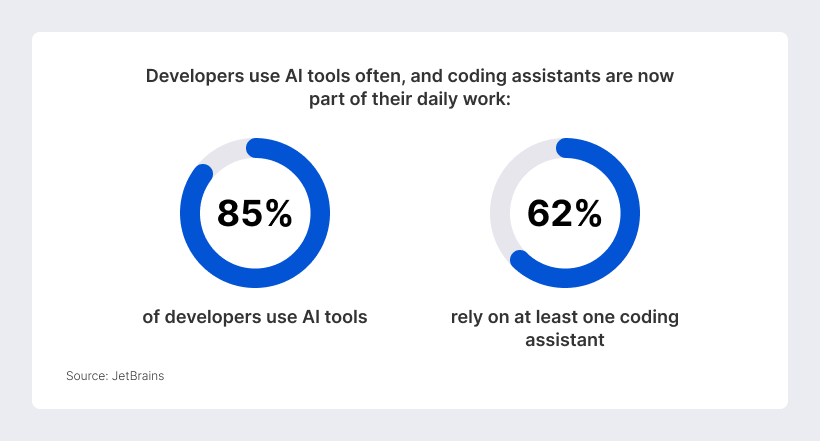

- AI is now part of mainstream software development: 85% of developers regularly use AI tools for coding and development, while 62% rely on at least one AI coding assistant, agent, or AI-powered editor.

- AI improves productivity only when engineering processes are mature: Teams gain the most value when AI is supported by clear specifications, strong testing, secure review workflows, and reliable CI/CD pipelines.

- The developer role is shifting from manual coding to oversight: Engineers increasingly focus on defining intent, validating output, managing architecture, reviewing risks, and ensuring long-term system coherence.

- AI delivers the strongest impact in structured SDLC tasks: Code generation, boilerplate reduction, test creation, documentation, refactoring, and maintenance are among the most practical and measurable use cases.

- Human-in-the-loop validation remains essential: AI-generated code must still pass automated checks, security scans, regression tests, architectural review, and final human approval before production use.

AI-assisted software development has entered a new phase. As of 2026, AI support is no longer a novelty layered on top of existing practices; it is becoming part of the normal development stack.

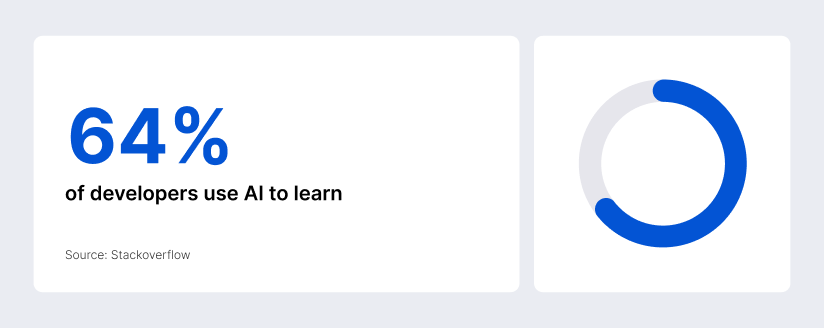

JetBrains reported that 85% of developers regularly use AI tools for coding and development, and 62% rely on at least one AI coding assistant, agent, or code editor. According to Stack Overflow, 64% of developers use AI to learn.

That does not mean every engineering team is automatically faster. The more important lesson is that AI behaves like an amplifier, not a miracle. Organizations with good platforms, strong feedback loops, and clear policies capture the upside, while teams with bottlenecks in review, testing, security, and release management often just move the bottleneck downstream.

Understanding the Paradigm Shift in Modern Engineering

The strategic shift is not simply “developers code faster.” It is that the center of value is moving away from typing syntax and toward defining intent, constraining behavior, validating output, and maintaining system coherence. The most successful teams are rethinking role design, SDLC checkpoints, and platform responsibilities at the same time.

Defining the AI-augmented developer role

The modern developer is gradually becoming more like a spec author, reviewer, systems thinker, and execution supervisor. GenAI’s strongest impact is in design, implementation, testing, and documentation, while higher-value work shifts toward specification quality, architectural reasoning, and oversight. AI is most useful when humans stay accountable for judgment, priorities, and governance.

From manual coding to intent-based programming

Intent-based programming does not mean abandoning code. It means expressing desired outcomes, constraints, interfaces, edge cases, and validation rules in natural language first, then using the model to generate a first draft that conforms to those requirements. That is why the best teams increasingly treat prompts like executable design briefs. The model needs acceptance criteria, non-goals, interface boundaries, migration limits, and verification steps. Without that structure, “vibe coding” drifts toward architecture erosion and hidden maintenance costs; with it, AI becomes a high-leverage drafting and execution layer.
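One way to picture a prompt as an executable design brief is a small data structure that refuses to hide missing sections. The following is a minimal sketch, not a prescribed format: the `DesignBrief` class, its field names, and the rendered layout are all illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class DesignBrief:
    """Hypothetical container for the sections a generation prompt should carry."""

    goal: str
    acceptance_criteria: list[str] = field(default_factory=list)
    non_goals: list[str] = field(default_factory=list)
    interface_boundaries: list[str] = field(default_factory=list)
    migration_limits: list[str] = field(default_factory=list)
    verification_steps: list[str] = field(default_factory=list)

    def _sections(self) -> dict[str, list[str]]:
        # Titles are illustrative; insertion order controls the prompt layout.
        return {
            "Acceptance criteria": self.acceptance_criteria,
            "Non-goals": self.non_goals,
            "Interface boundaries": self.interface_boundaries,
            "Migration limits": self.migration_limits,
            "Verification steps": self.verification_steps,
        }

    def missing_sections(self) -> list[str]:
        """List the sections left empty, so gaps are visible before generation."""
        return [title for title, items in self._sections().items() if not items]

    def to_prompt(self) -> str:
        """Render the goal plus every non-empty section as plain text."""
        parts = [f"Goal: {self.goal}"]
        for title, items in self._sections().items():
            if items:
                bullets = "\n".join(f"- {item}" for item in items)
                parts.append(f"{title}:\n{bullets}")
        return "\n\n".join(parts)
```

A team could call `missing_sections()` as a pre-generation check and send the brief back to its author whenever the list is not empty.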

The evolution of the software development lifecycle

The SDLC itself is becoming more asymmetric. Early phases, such as planning and requirements analysis, still show lower perceived gains, while implementation, testing, documentation, and maintenance show much stronger returns.

If teams only optimize code generation, they improve the least constrained stage of the pipeline. Gains in coding speed can disappear into testing, security review, or release friction unless the whole delivery system evolves with the tools.

Core Applications Across the SDLC

The real question is not whether AI belongs in the SDLC. It already does. The better question is where it creates durable value, where it mostly saves time on low-complexity work, and where human review remains non-negotiable.

Advanced Strategies for Effective Implementation

Most organizations already know how to buy AI seats. What separates strong outcomes from disappointing pilots is operating discipline: prompt structure, context grounding, validation design, and clear ownership boundaries.

For engineering work, the most dependable prompting pattern is decomposition. Complex tasks should be broken into multiple smaller tasks, as smaller, focused steps are easier for the model to test and for developers to review. That is the practical interpretation of “chain-of-thought prompting” for production teams. You do not need theatrical, page-long reasoning dumps in the UI. What you need is a prompt that asks for a plan, specifies constraints, includes examples, defines what must not change, and forces verification after each milestone.
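The decomposition pattern can be sketched as a loop that executes one milestone at a time and stops as soon as verification fails. This is a rough illustration under stated assumptions: `Milestone`, `run_plan`, and the `execute` and `verify` callables are hypothetical names, and in practice `execute` would hand the step to an assistant while `verify` would run tests or linters.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Milestone:
    description: str
    verify: Callable[[], bool]  # hypothetical hook, e.g. runs the test suite


def run_plan(milestones: list[Milestone], execute: Callable[[str], None]) -> list[str]:
    """Execute milestones in order and stop at the first failed verification.

    Returns the descriptions of the milestones that were executed and verified.
    """
    completed: list[str] = []
    for milestone in milestones:
        execute(milestone.description)
        if not milestone.verify():
            # Do not build further steps on top of an unverified one.
            break
        completed.append(milestone.description)
    return completed
```

Stopping at the first failure keeps each review small: the developer inspects one focused step instead of untangling several stacked changes.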

RAG becomes essential the moment the work depends on internal conventions, decision records, service boundaries, or business rules. Siloed or low-quality data is a major blocker because AI connected to bad data simply produces bad answers faster. For engineering leaders, the implication is straightforward: do not only deploy a model; deploy a context strategy. High-value retrieval sources usually include architecture decision records, coding standards, API contracts, runbooks, data definitions, support taxonomies, and prior postmortems.
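To make the idea of a context strategy concrete, here is a deliberately simple retrieval sketch. It ranks internal documents by word overlap with the task and places the best matches ahead of the request. Everything in it is an assumption made for illustration: production systems typically use embeddings and a vector store, and the `retrieve` and `build_prompt` helpers are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the names of the documents sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    scored = []
    for name, body in documents.items():
        overlap = len(query_tokens & tokenize(body))
        if overlap:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:top_k]]


def build_prompt(task: str, documents: dict[str, str], top_k: int = 2) -> str:
    """Prepend the retrieved documents to the task as labeled context."""
    names = retrieve(task, documents, top_k)
    context = "\n\n".join(f"[{name}]\n{documents[name]}" for name in names)
    return f"Context:\n{context}\n\nTask:\n{task}"
```

Whatever the ranking method, the quality of the answer is bounded by the quality of the documents in the store, which is why stale or siloed sources are such a costly blocker.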

Human oversight is not a concession to weak tooling. It is the normal control system for a high-autonomy environment. Google’s multi-agent guidance says business-critical agentic systems should include a human-in-the-loop flow so supervisors can monitor, override, and pause agents.

A workable validation framework usually has three checkpoints: first, a spec or issue review before generation; second, automated validation in a sandbox through tests, linters, scanners, and policy checks; third, human approval before merge or deployment.
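The three checkpoints can be expressed as a single gate that a change must clear in order. The sketch below is an assumption about how such a gate might look, not a reference implementation: `ChangeRequest`, `evaluate`, and the check names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ChangeRequest:
    spec_reviewed: bool                # checkpoint 1: spec or issue reviewed before generation
    automated_checks: dict[str, bool]  # checkpoint 2: e.g. {"tests": True, "linter": False}
    human_approved: bool               # checkpoint 3: approval before merge or deployment


def evaluate(change: ChangeRequest) -> tuple[bool, str]:
    """Apply the checkpoints in order and report the first one that blocks."""
    if not change.spec_reviewed:
        return False, "blocked: spec or issue not reviewed before generation"
    if not change.automated_checks:
        # An empty result set means nothing was validated, so treat it as a failure.
        return False, "blocked: no automated checks ran"
    failed = sorted(name for name, ok in change.automated_checks.items() if not ok)
    if failed:
        return False, "blocked: automated checks failed: " + ", ".join(failed)
    if not change.human_approved:
        return False, "blocked: awaiting human approval"
    return True, "ready to merge"
```

Ordering matters here: human approval is evaluated last, so reviewers only spend time on changes that already passed the spec review and the automated checks.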

Measuring Impact and ROI

If AI changes the economics of engineering, leaders need a measurement system that does not confuse activity with value.

Engineering metrics: velocity vs. code quality

Metrics that measure only output, such as the number of accepted lines of code, are a poor gauge of productivity, because AI can inflate the volume of output without delivering anything of value to the product. A more holistic approach measures speed, simplicity, and quality together.

| Measurement layer | What to track | Why it matters |
|---|---|---|
| Flow | Lead time for changes, review turnaround, batch size | AI often speeds code generation, but value is lost if review, testing, or release stages remain slow |
| Stability | Change failure rate, rollback rate, escaped defects | Faster code is not better if it raises incident frequency or fragility |
| Code quality | Test pass rate, static analysis findings, security findings, refactor churn | AI suggestions must be measured by maintainability and correctness, not just acceptance rate |
| DevEx | Self-reported speed, ease, focus time, cognitive load | Automated telemetry misses what it feels like to build inside the system |
| Business value | Feature adoption, conversion, retention, customer satisfaction | Shipping more code is meaningless if user outcomes do not improve |
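
Two of the metrics named in the table, lead time for changes and change failure rate, can be computed directly from deployment records. The following sketch assumes a hypothetical `Deployment` record with commit and deploy timestamps plus a failure flag; real pipelines would pull these fields from version control and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Deployment:
    committed_at: datetime
    deployed_at: datetime
    caused_failure: bool  # True if the change led to an incident or rollback


def median_lead_time_hours(deployments: list[Deployment]) -> float:
    """Median time from commit to deployment, in hours (needs at least one record)."""
    return median(
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    )


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Share of deployments that caused a failure (needs at least one record)."""
    return sum(d.caused_failure for d in deployments) / len(deployments)
```

Tracking both numbers together guards against the trap described above: a falling lead time paired with a rising failure rate means the team is shipping faster, not better.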

Developer experience and cognitive load reduction

One of the biggest underappreciated benefits of AI is not speed in isolation; it is reduced context-switching and lower cognitive overhead when the surrounding platform is good enough. High-quality internal platforms make AI adoption meaningfully positive, while low-quality platforms erase the gains. If you “shift down” complexity into the platform, developers do not have to become temporary experts in infra, networking, or compliance for every task.

The AI measurement framework for engineering leaders

The strongest measurement programs now combine several views instead of searching for one perfect score. Choose the “why” first and then select metrics from frameworks such as SPACE, DevEx, H.E.A.R.T., or DORA, depending on whether your goal is developer experience, product excellence, or organizational effectiveness. ROI varies sharply by task type, codebase familiarity, validation overhead, and workflow maturity.

Strategic Tooling and Infrastructure

Tool choice matters, but infrastructure maturity matters more. The best AI assistant for software developers will still disappoint if it lacks access to the right context, validation hooks, and organizational guardrails.

An effective AI assistant for software developers should improve everyday efficiency without weakening code quality, architectural consistency, or long-term scalability. For more complex tasks, teams still need human review of the underlying algorithm, system behavior, and deployment risks.

Comparing integrated development environment extensions

The table below compares the most mature enterprise options currently available.

| Tool | Best fit | Native strengths | Governance note |
|---|---|---|---|
| GitHub Copilot | Teams already centered on GitHub workflows | Cloud agent, code review, strong pull-request integration, GitHub Actions automation, MCP support | Built-in public-code matching checks help, but GitHub still recommends testing, IP scanning, and security review |
| JetBrains AI Assistant | JetBrains-heavy engineering organizations | Context-aware IDE chat, in-editor actions, coding agents, local and third-party model support | Strong for teams that want AI embedded directly inside the IDE workflow |
| Amazon Q Developer | AWS-centric teams and platform-heavy backlogs | AWS-aware chat, security scanning, optimization, refactoring, upgrade, and transform workflows | Free tier content may be used for service improvement or training; Pro and Business content is not |
| Gemini Code Assist | Google Cloud users and mixed-language teams | Code completions, function generation, unit tests, debugging help, and source citations | Google says prompts and responses are not used to train underlying models, but all output still needs validation |

Integrating AI into CI/CD pipelines

The next level of value comes when AI leaves the chat window and enters the delivery system. In practical terms, CI/CD integration works best for test generation, failure summarization, fix suggestions, release-note drafting, config scaffolding, dependency updates, review automation, and documentation refreshes. It works worst when teams let AI produce large unreviewed batches or treat the pipeline as a ceremonial final step rather than an active validation environment.

Security and compliance in AI-generated code

The data-governance side varies materially by vendor and plan. Google states that Gemini for Google Cloud does not use prompts or generated responses to train or fine-tune underlying models. AWS says Amazon Q Developer Free tier content may be used for service improvement or training, while Pro and Business content is not.

The European Commission says the AI Act entered into force on August 1, 2024, and will be fully applicable on August 2, 2026, with some provisions applying earlier; obligations for providers of GPAI models started applying on August 2, 2025. That means organizations should already be lining up policy, governance, and documentation practices rather than waiting for a last-minute compliance scramble.

Overcoming Adoption Challenges

The friction points are no longer hard to identify. Most teams run into the same cluster of issues: hallucinations, uneven logic, security and IP concerns, shaky trust, and cultural resistance. The teams that progress are the ones that acknowledge those risks early and design around them.

The Future of AI in Software Engineering

The next wave is already visible: more autonomy, more orchestration, more context plumbing, and more movement from code generation toward system-level execution. But the future is unlikely to be fully autonomous or fully no-code. It is shaping up as a hybrid model where humans define intent and governance while AI handles more of the execution surface.

Autonomous agents and self-healing codebases

Agentic tools are evolving quickly. GitHub’s cloud agent can work independently in the background on research, planning, coding, coverage, and documentation tasks. It is described as an agentic coding system that reads the codebase, makes changes across files, runs tests, and delivers committed code. GitHub’s own product material also uses the phrase “self-healing capabilities” for agent mode when analyzing runtime errors.

The rise of no-code/low-code for professional developers

Low-code and no-code are no longer just “citizen developer” tools. Gartner’s 2025 software-engineering trends release predicts that by 2028, 90% of enterprise software engineers will use AI code assistants and that the developer role will shift from implementation toward orchestration, system design, and quality control.

For professional developers, this does not reduce relevance. It changes where expertise gets applied. The winners will be the teams that standardize guardrails, APIs, policies, reusable components, and platform primitives so that faster app creation does not produce a long tail of ungoverned internal tools.

Why Choose SaM Solutions for AI-Assisted Software Development?

AI-assisted software development delivers value only when it is connected to real engineering discipline: architecture, clean delivery processes, testing, security, and long-term maintainability. SaM Solutions brings these pieces together through custom software development, IT consulting, solution architecture, cloud, AI and data, QA, DevOps, and legacy modernization services.

For organizations adopting AI in software engineering, this matters because productivity gains depend on more than code generation. SaM Solutions can help teams identify where AI fits into the SDLC, build AI-enabled applications, modernize existing systems, integrate intelligent automation, and create validation workflows that keep output reliable and secure.

With over three decades on the market, 1,000+ completed projects, 800+ IT experts, and global delivery experience, we are well-positioned to support companies that want to move from AI experiments to production-ready engineering practices.

Conclusion

The most productive engineering teams are not the teams with the loudest AI story. They are the teams that have learned to turn AI into a disciplined part of the delivery system. If there is one idea to carry forward, it is this: AI is strongest when it is paired with strong specs, healthy context pipelines, fast feedback, measurable quality controls, and a platform that reduces cognitive load. That is what turns engineering productivity from a demo effect into an operating advantage.